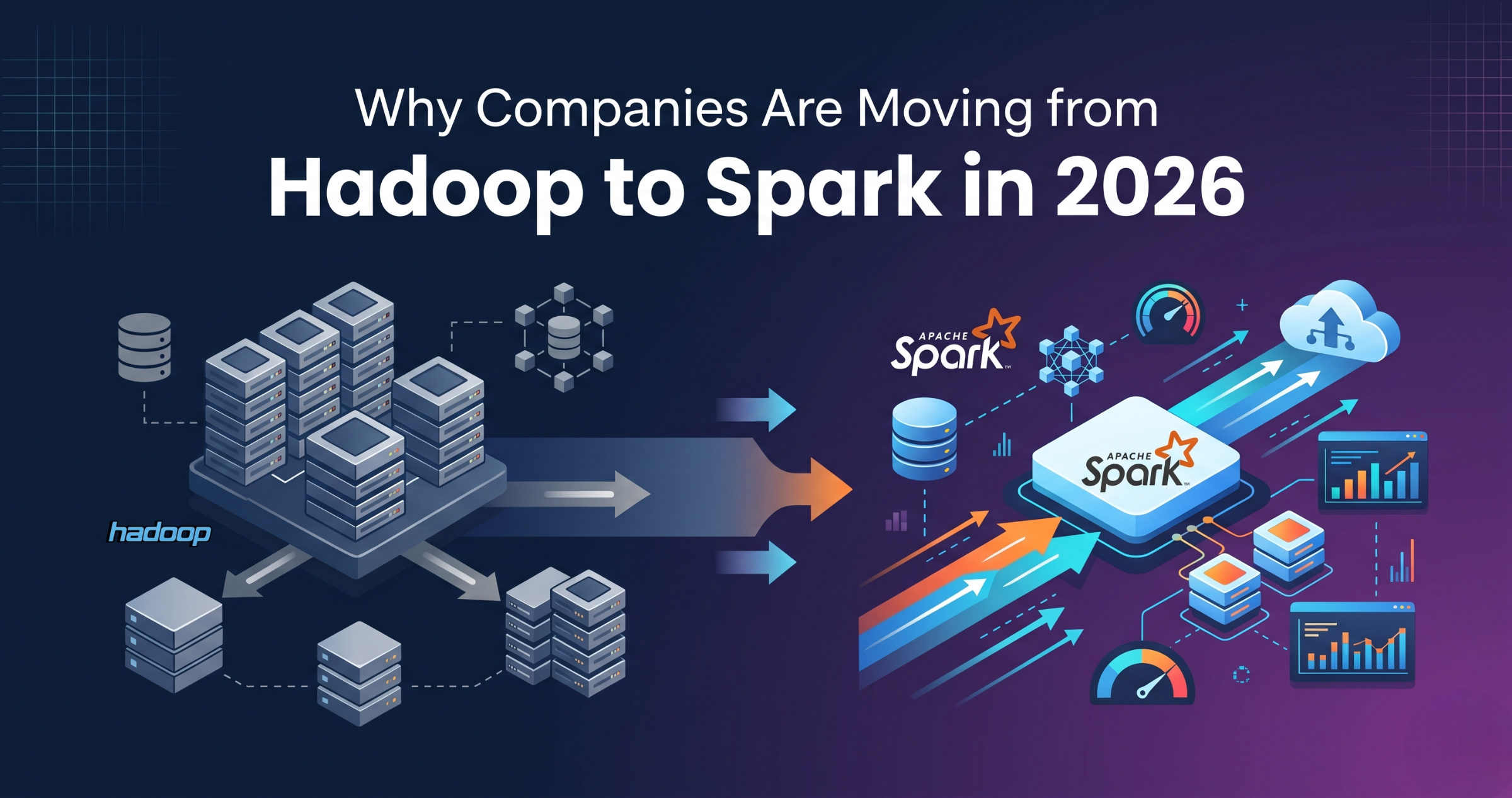

In today’s data-driven world, companies are handling massive amounts of data every day. Processing this data quickly and efficiently has become a major challenge. This is where Apache Spark comes in.

Apache Spark is one of the most popular tools used in data engineering for large-scale data processing. Many companies rely on Spark to build fast and scalable data pipelines.

If you are planning to start a career in data engineering, learning Apache Spark in 2026 is not just useful, it is essential.

What is Apache Spark?

Apache Spark is an open-source data processing framework used to process large amounts of data quickly. It works in a distributed environment, which means it can process data across multiple machines at the same time.

In simple terms, Spark allows you to handle big data efficiently without waiting for long processing times.

Unlike traditional disk-based systems such as Hadoop MapReduce, Spark keeps intermediate data in memory where it can, which often makes it much faster. It offers APIs in Python, Scala, Java, and R, and it also supports SQL, making it flexible for different users.

Why Apache Spark is Important in Data Engineering

Data engineering is all about building systems that handle large volumes of data. Spark plays a key role in this because it can process huge datasets quickly and reliably.

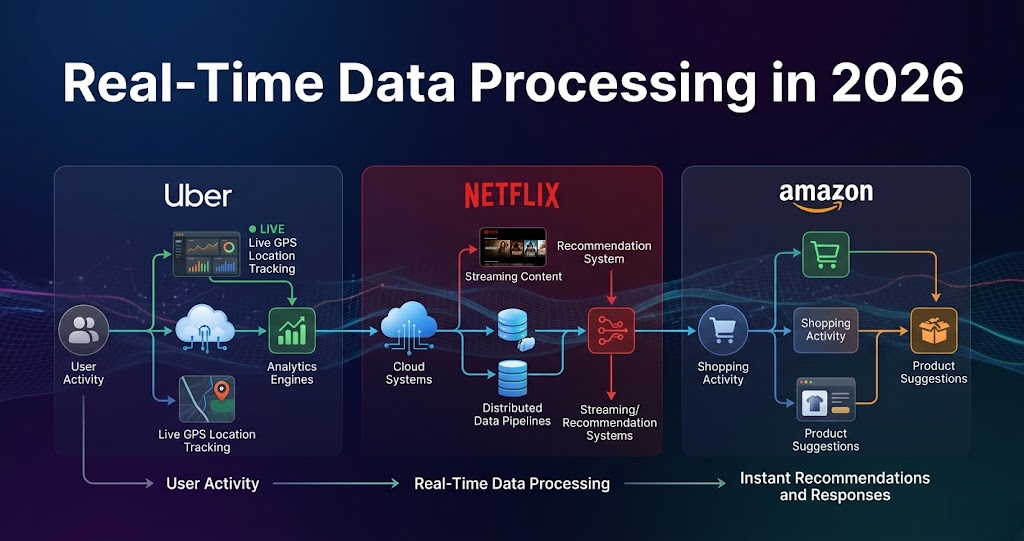

Many modern data pipelines depend on Spark for transforming and analyzing data. Whether it is batch processing or real-time data, Spark can handle both.

As companies continue to generate more data, the need for tools like Spark is increasing.

Key Features of Apache Spark

Apache Spark provides several features that make it powerful and widely used.

- Fast processing due to in-memory computation

- Supports batch and real-time processing

- Works with large-scale distributed systems

- Easy integration with cloud platforms like AWS and Azure

- Supports SQL, machine learning, and streaming

Together, these features let Spark cover most data processing needs within a single framework.

How Apache Spark Works

Apache Spark works by dividing data into smaller parts, called partitions, and processing them across multiple machines. This approach is called distributed processing.

Instead of processing data in a single system, Spark distributes the workload. This reduces processing time and improves performance.
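To make the idea concrete, here is a minimal sketch in plain Python. It is not Spark code and it runs on a single machine: threads stand in for worker machines, and the function names (`split_into_partitions`, `sum_partition`, `distributed_sum`) are made up for this illustration. It only shows the general pattern of splitting data, working on each part independently, and combining the partial results.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(records, num_partitions):
    """Split a list into roughly equal chunks, one per worker."""
    # Ceiling division, with a minimum chunk size of 1 for empty input.
    size = max(1, -(-len(records) // num_partitions))
    return [records[i:i + size] for i in range(0, len(records), size)]


def sum_partition(partition):
    """The work done independently on each partition."""
    return sum(partition)


def distributed_sum(records, num_partitions=4):
    partitions = split_into_partitions(records, num_partitions)
    # Threads stand in for the worker machines of a real cluster.
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partial_sums = list(pool.map(sum_partition, partitions))
    # Combine the per-partition results into the final answer.
    return sum(partial_sums)


total = distributed_sum(list(range(1, 101)))
print(total)  # 5050
```

In a real Spark job you do not write this splitting and combining yourself. Spark plans the work and schedules it on the cluster for you.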

It uses components like:

- Spark Core for basic processing

- Spark SQL for structured data

- Structured Streaming (the successor to the older Spark Streaming API) for real-time data

- MLlib for machine learning

This modular design makes Spark flexible for different use cases.

Why Spark is a Must-Have Skill in 2026

There are several reasons why learning Apache Spark is important in 2026.

First, it is widely used in the industry. Many companies use Spark as a core part of their data systems.

Second, it offers strong career opportunities. Data engineers with Spark skills are in high demand.

Third, it supports modern data architectures. Tools like Delta Lake, Snowflake, and cloud platforms work well with Spark.

Fourth, it improves your ability to handle big data problems. This is a critical skill in today’s job market.

For these reasons, Spark is considered a must-have skill for data engineers.

How to Start Learning Apache Spark

If you are a beginner, you can start learning Spark step by step. You do not need to learn everything at once.

Start with:

- Basic SQL and Python

- Understanding data processing concepts

- Learning PySpark (Spark with Python)

- Practicing small projects

Once you understand the basics, you can move to advanced topics like optimization and real-time processing.

Common Mistakes Beginners Make

Many beginners make mistakes while learning Spark. Being aware of these can help you avoid problems.

- Trying to learn advanced concepts too early

- Ignoring fundamentals like SQL

- Not practicing real-world scenarios

- Focusing only on theory

Learning step by step with practice is the best approach.

Apache Spark is one of the most important tools in data engineering. It helps process large datasets efficiently and supports modern data systems.

In 2026, companies are increasingly relying on Spark for building scalable and fast data pipelines. This makes it a valuable skill for anyone entering the data field.

If you want to build a strong career in data engineering, learning Apache Spark is a smart and necessary step.