Data engineering is one of the fastest-growing fields in technology. Companies today depend on data to make decisions, build products, and improve performance. Because of this, data engineers play a key role in building systems that collect, process, and store data.

If you want to become a data engineer in 2026, choosing the right tools to learn matters. There are many tools available, but you do not need to learn all of them. Focus on the ones that show up most often in real-world projects.

This post walks through the data engineering tools most worth learning, from beginner level to advanced level.

Why Learning Data Engineering Tools Is Important

Data engineering is not only about theory. It is about building real systems that work with large amounts of data.

Tools help you:

- Build data pipelines

- Process large datasets

- Store and manage data

- Work with cloud platforms

- Improve performance and scalability

Without these tools, it is difficult to work on real projects. That is why learning them step by step is important.

Beginner-Level Tools

If you are starting from scratch, you should first focus on basic tools. These will help you understand core concepts.

SQL

SQL is the most important skill for data engineers. It is used to query and manage data in databases.

You will use SQL in almost every project. Without SQL, it is very difficult to move forward in data engineering.
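To make this concrete, here is a minimal sketch of a typical SQL task: totaling values per group. It uses Python's built-in `sqlite3` module so it runs without installing a database server. The `orders` table and its rows are made up for illustration.

```python
import sqlite3

# In-memory database, so the example leaves no files behind.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("alice", 30.0), ("bob", 12.5), ("alice", 20.0)],
)

# Aggregate query: total spend per customer, largest first.
totals = conn.execute(
    """
    SELECT customer, SUM(amount) AS total
    FROM orders
    GROUP BY customer
    ORDER BY total DESC
    """
).fetchall()
conn.close()

print(totals)
```

The same `GROUP BY` pattern carries over to MySQL, PostgreSQL, and cloud data warehouses with little or no change.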

Python

Python is widely used for data processing and automation. Its syntax is easy to pick up, and its standard library and third-party packages cover most day-to-day data tasks.

You can use Python for:

- Data transformation

- Writing scripts

- Working with APIs
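As a small illustration of a transformation script, the sketch below reads CSV text, fixes types, fills a missing value, and emits JSON. The column names and sample rows are invented for the example, and it uses only the standard library.

```python
import csv
import io
import json

# Hypothetical raw input; in practice this would come from a file or an API.
RAW_CSV = """user_id,signup_date,country
1,2025-01-03,us
2,2025-02-14,
3,2025-03-09,de
"""


def transform(rows):
    """Convert IDs to integers and normalize the country code."""
    for row in rows:
        yield {
            "user_id": int(row["user_id"]),
            "signup_date": row["signup_date"],
            # An empty country becomes "UNKNOWN"; others are upper-cased.
            "country": (row["country"] or "unknown").upper(),
        }


records = list(transform(csv.DictReader(io.StringIO(RAW_CSV))))
print(json.dumps(records, indent=2))
```

Writing the transformation as a generator keeps memory use low, because rows are processed one at a time instead of being loaded all at once.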

Basic Databases

Understanding how databases work is important. You should learn:

- MySQL or PostgreSQL

- Basic data storage concepts

- Tables, indexes, and queries

These tools help you build a strong foundation.
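To see why indexes matter, the sketch below asks SQLite how it plans to run the same query before and after an index is created. The `events` table and the index name are made up for illustration, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (id INTEGER PRIMARY KEY, user_id INTEGER, kind TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, kind) VALUES (?, ?)",
    [(i % 100, "click") for i in range(1000)],
)


def plan(sql):
    """Return SQLite's query plan for a statement as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # The last column of each row holds the human-readable plan step.
    return " | ".join(row[-1] for row in rows)


query = "SELECT COUNT(*) FROM events WHERE user_id = 42"

before = plan(query)  # without an index, SQLite scans the whole table
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
after = plan(query)   # with the index, SQLite can look up matching rows

print("before:", before)
print("after: ", after)
```

MySQL and PostgreSQL offer a similar `EXPLAIN` command, which is worth getting comfortable with early.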

Intermediate-Level Tools

Once you understand the basics, you can move to intermediate tools that are used in real data pipelines.

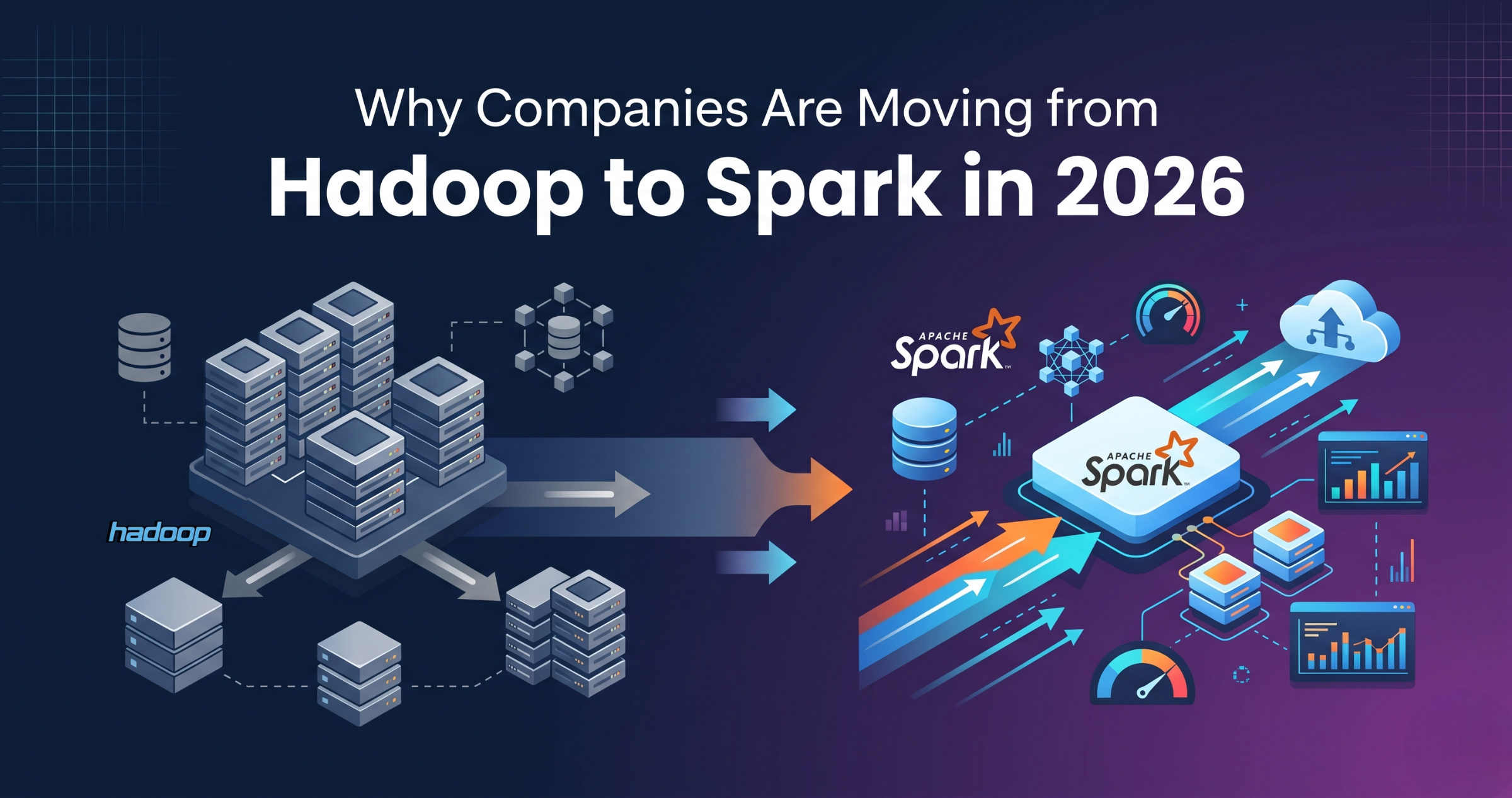

Apache Spark

Apache Spark is a distributed processing engine. It splits large datasets into partitions and processes them in parallel across a cluster, which is why it is widely used for large-scale workloads.

It helps in:

- Batch processing

- Near-real-time stream processing (Structured Streaming)

- Large-scale data transformation
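The core idea behind distributed processing can be sketched in plain Python: split the data into partitions, process each partition independently, then merge the partial results. This is not Spark's API. Threads stand in for cluster workers, and the helper names are invented for the example.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(items, n):
    """Deal items into n roughly equal partitions."""
    return [items[i::n] for i in range(n)]


def count_words(partition):
    """Map step: count words using only the lines in one partition."""
    counts = Counter()
    for line in partition:
        counts.update(line.lower().split())
    return counts


lines = [
    "data moves fast",
    "big data needs big tools",
    "data tools scale",
]

partitions = split_into_partitions(lines, 2)

# Each partition is processed independently, as a worker node would do.
with ThreadPoolExecutor(max_workers=2) as pool:
    partial_counts = list(pool.map(count_words, partitions))

# Reduce step: merge the per-partition results into one total.
total = sum(partial_counts, Counter())
print(total.most_common(3))
```

Spark applies the same split, process, and merge pattern, but across many machines and with fault tolerance built in.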

Data Warehouses

Data warehouses are used to store processed data for analysis.

Popular tools include:

- Snowflake

- Amazon Redshift

- Google BigQuery

These tools are important for analytics and reporting.

ETL Tools

ETL (extract, transform, load) tools move data from one system to another and transform it along the way.

Examples:

- AWS Glue

- Azure Data Factory

- Apache Airflow (a workflow orchestrator that schedules and monitors pipeline tasks)

These tools help automate data pipelines.
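A pipeline is usually described as a set of tasks plus the dependencies between them. The sketch below shows that idea with three toy tasks and the standard library's `graphlib` module (Python 3.9+) to work out the run order. It is not the API of Airflow, Glue, or Data Factory. The task functions and the `warehouse` list are placeholders.

```python
from graphlib import TopologicalSorter

warehouse = []  # stands in for a target table


def extract():
    """Pretend to pull raw rows from a source system."""
    return [{"name": " Ada ", "score": "91"}, {"name": "Linus", "score": "78"}]


def transform(rows):
    """Trim whitespace and convert scores to integers."""
    return [{"name": r["name"].strip(), "score": int(r["score"])} for r in rows]


def load(rows):
    """Append cleaned rows to the target and report how many were written."""
    warehouse.extend(rows)
    return len(rows)


# Each task maps to the set of tasks it depends on.
dag = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
tasks = {"extract": extract, "transform": transform, "load": load}

results = {}
order = list(TopologicalSorter(dag).static_order())
for name in order:
    # Pass each upstream task's result into the task that depends on it.
    upstream = [results[dep] for dep in dag[name]]
    results[name] = tasks[name](*upstream)

print(order)
print(warehouse)
```

Real orchestrators add scheduling, retries, and monitoring on top of this dependency-ordering idea.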

Advanced-Level Tools

At the advanced level, you will work with modern data architecture tools.

dbt (data build tool)

dbt is used for transforming data inside data warehouses. You write each transformation as a SQL SELECT statement, and dbt runs it in the warehouse and saves the result as a table or view.

It is widely used in modern data engineering workflows.
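The idea of a SQL-based transformation living inside the database can be sketched with SQLite. This is not dbt itself. The example only mimics the pattern of taking a SELECT statement and materializing it as a view, and the table, view, and column names are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE raw_orders (id INTEGER, status TEXT, amount_cents INTEGER);
    INSERT INTO raw_orders VALUES
        (1, 'paid', 1250),
        (2, 'refunded', 400),
        (3, 'paid', 800);
    """
)

# The "model" is just a SELECT: keep paid orders and convert cents to units.
MODEL_SQL = """
    SELECT id, amount_cents / 100.0 AS amount
    FROM raw_orders
    WHERE status = 'paid'
"""

# Materialize the model as a view so downstream queries can reuse it.
conn.execute(f"CREATE VIEW paid_orders AS {MODEL_SQL}")

rows = conn.execute("SELECT id, amount FROM paid_orders ORDER BY id").fetchall()
print(rows)
```

Keeping the transformation in SQL means the data never leaves the warehouse, which is the main appeal of this approach.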

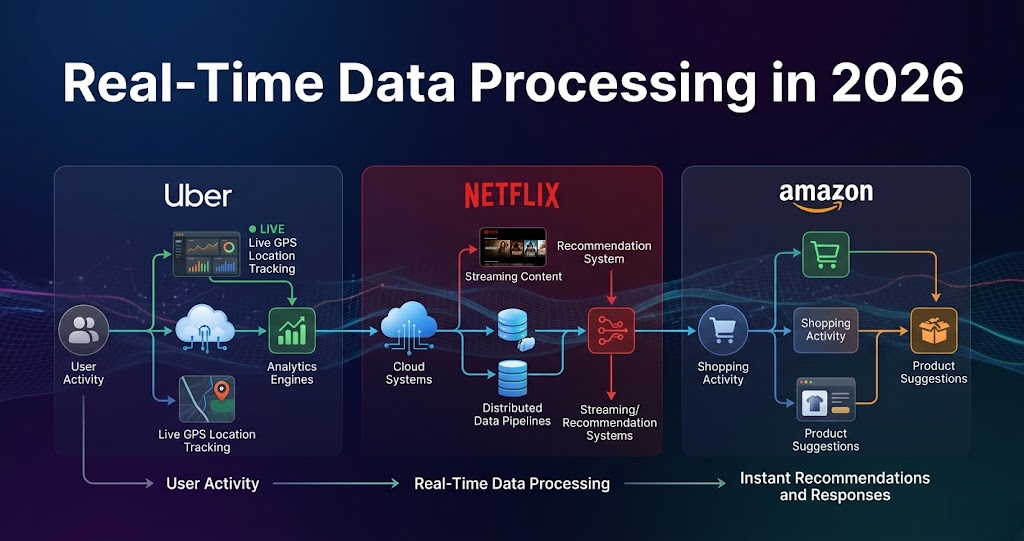

Streaming Tools

Streaming tools are used for real-time data processing.

Examples:

- Apache Kafka

- Spark Structured Streaming

These tools are used in applications like real-time analytics and monitoring systems.
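A common streaming operation is counting events per fixed time window, often called a tumbling window. The sketch below shows the logic in plain Python with a generator. It does not use Kafka or Spark, the event data is made up, and it assumes events arrive in timestamp order.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count events per fixed, non-overlapping time window.

    events: iterable of (timestamp_seconds, key), assumed to be in time order.
    Yields (window_start, {key: count}) each time a window closes.
    Windows that contain no events are skipped.
    """
    current_start = None
    counts = defaultdict(int)
    for ts, key in events:
        window_start = ts - (ts % window_seconds)
        if current_start is not None and window_start != current_start:
            # The event belongs to a later window, so emit the finished one.
            yield current_start, dict(counts)
            counts = defaultdict(int)
        current_start = window_start
        counts[key] += 1
    if current_start is not None:
        # Emit the final, still-open window when the input ends.
        yield current_start, dict(counts)


events = [(0, "login"), (3, "click"), (9, "click"), (11, "login"), (25, "click")]
windows = list(tumbling_window_counts(events, 10))
for start, counts in windows:
    print(start, counts)
```

Production streaming systems also have to handle late and out-of-order events, which this sketch deliberately ignores.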

Cloud Platforms

Cloud platforms are essential for data engineering in 2026.

You should learn:

- AWS

- Azure

- Google Cloud

These platforms provide storage, processing, and data services.

How to Learn These Tools (Right Approach)

Many beginners make the mistake of trying to learn everything at once. This creates confusion.

Instead, follow this step-by-step approach:

- Start with SQL and Python

- Learn database concepts

- Move to Spark and ETL tools

- Learn cloud basics

- Then explore advanced tools like dbt and streaming

Practice is very important. Try to build small projects to understand how tools work together.

Common Mistakes to Avoid

While learning data engineering tools, avoid these mistakes:

- Learning tools without understanding the basics

- Trying too many tools at once

- Not practicing real-world scenarios

- Ignoring data concepts

Focus on understanding how tools are used in real projects.

Data engineering tools are the backbone of modern data systems. In 2026, companies are using a combination of tools to build scalable and efficient data pipelines.

You do not need to learn everything at once. Start with basics, move step by step, and focus on real-world use cases.

By learning the right tools in the right order, you can build a strong career in data engineering.