Traditional data lakes can no longer keep up with modern data demands, because the field is evolving so fast. Today’s organizations need systems that can handle real-time processing, ensure data reliability, and scale efficiently. This is where Delta Lake is a game-changer. More companies than ever are moving from plain data lakes to Delta Lake in 2026, because it combines the flexibility of a data lake with the reliability of a data warehouse.

What Is Delta Lake and Why It Matters

Delta Lake is an open-source storage layer that adds ACID transactions, schema enforcement, and time travel to existing data lakes. In a traditional data lake, a failed or concurrent write can leave a table in an inconsistent state. Delta Lake ensures that readers always see a complete, consistent version of the data. That makes it a vital part of modern data architectures, especially for companies that work with large volumes of data.

Key Reasons Behind the Shift to Delta Lake

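Delta Lake’s ACID support is one of the main reasons it’s so popular. In a traditional data lake, concurrent writes and job failures can leave tables corrupted or half-written. In Delta Lake, every write is recorded as a single atomic commit in the table’s transaction log, and concurrent writers are coordinated with optimistic concurrency control, so readers never see partial results.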
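
To make the idea concrete, here is a deliberately simplified Python model of how a Delta-style transaction log provides atomic commits. It is a sketch under stated assumptions, not Delta Lake’s actual API: the class `ToyDeltaLog`, the `ConflictError` exception, and the `("add" | "remove", path)` action format are hypothetical stand-ins for the JSON commit files Delta Lake writes to a table’s `_delta_log` directory. A commit claims the next version number only if no other writer got there first, which is the core of optimistic concurrency control.

```python
from typing import Dict, List, Set, Tuple

Action = Tuple[str, str]  # hypothetical format: ("add" | "remove", file path)


class ConflictError(Exception):
    """Raised when another writer has already claimed the next version."""


class ToyDeltaLog:
    """Hypothetical, in-memory model of a Delta-style transaction log."""

    def __init__(self) -> None:
        self.commits: Dict[int, Tuple[Action, ...]] = {}

    def latest_version(self) -> int:
        return max(self.commits, default=-1)

    def commit(self, read_version: int, actions: List[Action]) -> int:
        """Atomically record `actions` as version read_version + 1.

        Works like a put-if-absent write of the next log entry: if that
        version already exists, a concurrent writer won and this commit fails.
        """
        new_version = read_version + 1
        if new_version in self.commits:
            raise ConflictError(f"version {new_version} was already committed")
        # All actions are stored in one step, so readers never see half a commit.
        self.commits[new_version] = tuple(actions)
        return new_version

    def live_files(self) -> Set[str]:
        """Replay every commit in order to find the files currently in the table."""
        files: Set[str] = set()
        for version in sorted(self.commits):
            for op, path in self.commits[version]:
                if op == "add":
                    files.add(path)
                elif op == "remove":
                    files.discard(path)
        return files


log = ToyDeltaLog()
v0 = log.commit(-1, [("add", "part-000.parquet")])

# Two writers both read version 0 and race to commit version 1.
log.commit(v0, [("add", "part-001.parquet")])
try:
    log.commit(v0, [("add", "part-002.parquet")])
except ConflictError as err:
    print(err)  # version 1 was already committed

print(sorted(log.live_files()))  # ['part-000.parquet', 'part-001.parquet']
```

Real Delta Lake is more forgiving than this toy: the losing writer checks whether the winning commit actually conflicts with its own changes (two appends to the same table typically don’t) and, if not, retries at the next version.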

The time travel feature also lets users query previous versions of a table. You can use it to debug data pipelines, audit changes, and recover from bad writes by reading or restoring an earlier version. This kind of control and transparency is hard to achieve in a plain data lake.
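
Because Delta Lake records every change to a table in a transaction log, a past version is simply the result of replaying the log up to that point. The Python sketch below models this with a hypothetical `snapshot()` function over a list of commits, where each commit is a list of `("add" | "remove", path)` actions. It is a simplified illustration, not Delta Lake’s implementation, and it assumes removed files are still physically available (in Delta Lake they stay on storage until `VACUUM` deletes them after the retention period).

```python
from typing import List, Set, Tuple

Action = Tuple[str, str]  # hypothetical format: ("add" | "remove", file path)


def snapshot(commits: List[List[Action]], version: int) -> Set[str]:
    """Return the data files visible at `version` by replaying commits 0..version."""
    if not 0 <= version < len(commits):
        raise ValueError(f"version {version} does not exist")
    files: Set[str] = set()
    for actions in commits[: version + 1]:
        for op, path in actions:
            if op == "add":
                files.add(path)
            elif op == "remove":
                files.discard(path)
    return files


# Hypothetical history: an initial load, an append, then a bad overwrite.
history: List[List[Action]] = [
    [("add", "a.parquet")],
    [("add", "b.parquet")],
    [("remove", "a.parquet"), ("remove", "b.parquet"), ("add", "bad.parquet")],
]

print(sorted(snapshot(history, 2)))  # ['bad.parquet']
print(sorted(snapshot(history, 1)))  # ['a.parquet', 'b.parquet']
```

With a real Delta table, the equivalent read in PySpark is `spark.read.format("delta").option("versionAsOf", 1).load(path)`, and `DESCRIBE HISTORY` lists the versions you can go back to.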

Schema enforcement and evolution also drive adoption. By rejecting writes that don’t match the table’s schema, Delta Lake keeps invalid or unexpected data from breaking downstream pipelines. At the same time, schemas can evolve over time, for example by adding new columns, so tables can keep up with changing business requirements.
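
The difference between enforcement and evolution can be sketched in a few lines of Python. This is a toy model, not Delta Lake’s API: `check_append`, `SchemaMismatch`, and the `merge_schema` flag are hypothetical names, and a schema is assumed to be a plain mapping from column name to Python type. By default, unknown columns and wrongly typed values are rejected; with `merge_schema=True`, new columns are added instead.

```python
from typing import Any, Dict, List

Schema = Dict[str, type]  # hypothetical: column name -> Python type


class SchemaMismatch(Exception):
    """Raised when incoming rows don't fit the table schema."""


def check_append(
    table_schema: Schema, rows: List[Dict[str, Any]], merge_schema: bool = False
) -> Schema:
    """Return the schema after appending `rows`, or raise SchemaMismatch.

    Enforcement: unknown columns and wrongly typed values are rejected.
    Evolution: with merge_schema=True, unknown columns are added instead.
    Columns missing from a row are allowed (they would be stored as null).
    """
    new_schema = dict(table_schema)  # never mutate the caller's schema
    for row in rows:
        for col, value in row.items():
            if col not in new_schema:
                if not merge_schema:
                    raise SchemaMismatch(f"unexpected column {col!r}")
                # Assumes a new column's first value is non-null.
                new_schema[col] = type(value)
            elif value is not None and not isinstance(value, new_schema[col]):
                raise SchemaMismatch(
                    f"column {col!r} expects {new_schema[col].__name__}, "
                    f"got {type(value).__name__}"
                )
    return new_schema


schema: Schema = {"id": int, "amount": float}
check_append(schema, [{"id": 1, "amount": 9.5}])  # fits: schema unchanged

try:
    check_append(schema, [{"id": "x", "amount": 1.0}])
except SchemaMismatch as err:
    print(err)  # column 'id' expects int, got str

evolved = check_append(schema, [{"id": 2, "amount": 3.0, "country": "DE"}], merge_schema=True)
print(list(evolved))  # ['id', 'amount', 'country']
```

In Delta Lake itself, enforcement is on by default, and evolution is opted into per write, for example with `.option("mergeSchema", "true")` on a DataFrame append.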

Performance improvements add to its appeal. Features like data skipping (using per-file statistics to avoid reading irrelevant files), file compaction, and Z-ordering make queries significantly faster and more efficient. And because the same Delta table can serve both batch and streaming workloads, organizations can use a single system for multiple use cases and simplify their data architecture.
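
Data skipping works because Delta Lake’s transaction log records per-file statistics, such as the minimum and maximum value of each column. The Python sketch below models that idea for a single column; `FileStats` and `files_to_scan` are hypothetical names, and real Delta Lake collects and applies these statistics for you. A file can be skipped whenever its value range cannot overlap the query’s filter range.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FileStats:
    """Hypothetical per-file statistics for one column."""
    path: str
    min_value: int
    max_value: int


def files_to_scan(files: List[FileStats], low: int, high: int) -> List[str]:
    """Return files whose [min, max] range may contain values in [low, high]."""
    return [f.path for f in files if f.max_value >= low and f.min_value <= high]


# Files whose values are clustered into tight, non-overlapping ranges.
clustered = [
    FileStats("part-0.parquet", 1, 100),
    FileStats("part-1.parquet", 101, 200),
    FileStats("part-2.parquet", 201, 300),
]
print(files_to_scan(clustered, 150, 160))  # ['part-1.parquet']

# The same values spread randomly: every file's range overlaps the filter.
unclustered = [
    FileStats("part-0.parquet", 1, 299),
    FileStats("part-1.parquet", 2, 300),
    FileStats("part-2.parquet", 3, 298),
]
print(files_to_scan(unclustered, 150, 160))  # ['part-0.parquet', 'part-1.parquet', 'part-2.parquet']
```

This is why file layout matters. Compaction reduces the number of small files a query has to open, and Z-ordering (for example `OPTIMIZE events ZORDER BY (event_date)`, where `events` and `event_date` are hypothetical names) rewrites data so related values land in the same files, tightening these ranges so more files can be skipped.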

Real-World Applications

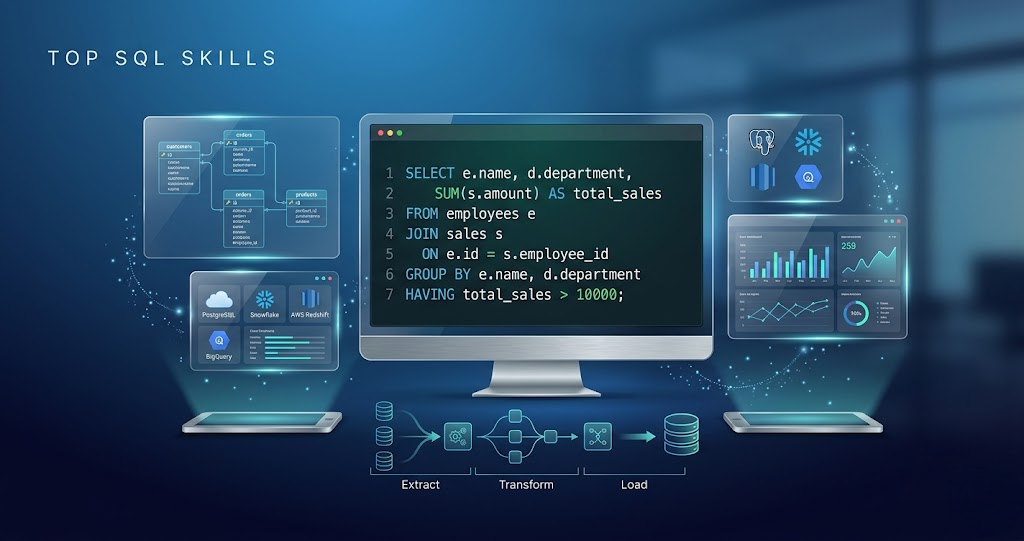

Across industries, Delta Lake is used to build scalable and reliable data solutions, including real-time analytics, fraud detection systems, machine learning pipelines, and large-scale ETL processes. Because streaming and batch jobs can read and write the same Delta table at the same time, both kinds of data can be handled together while the data stays consistent.

Why You Should Learn Delta Lake in 2026

Delta Lake has become an essential skill for data engineers as companies adopt modern data architectures. It integrates seamlessly with tools like Apache Spark and runs on the major cloud platforms, including AWS, Azure, and GCP. A Delta Lake course not only enhances your technical skills but also makes you more marketable.

Delta Lake is a significant advancement in data engineering that addresses many of the limitations of traditional data lakes. Because it provides reliable, high-performance, and scalable data processing, it has become a foundational technology in modern data systems. Its growing adoption in 2026 shows that it’s not just a trend but a long-term shift in data platforms.