Delta Lake Explained in Databricks – Features, Architecture, and Use Cases

Introduction

Trying to understand Delta Lake in Databricks, but confused about how it is used in real data engineering projects?

You’re not alone.

Most people:

- Learn what Delta Lake is

- Hear about ACID transactions

- Learn Databricks basics

But when asked why Delta Lake is needed and how it solves real problems, they get stuck.

That is because knowing the features is not the same as understanding how data is managed in real pipelines.

In this blog, you’ll understand:

- What Delta Lake is

- Why it is used

- How it works in Databricks

- Step-by-step real pipeline flow

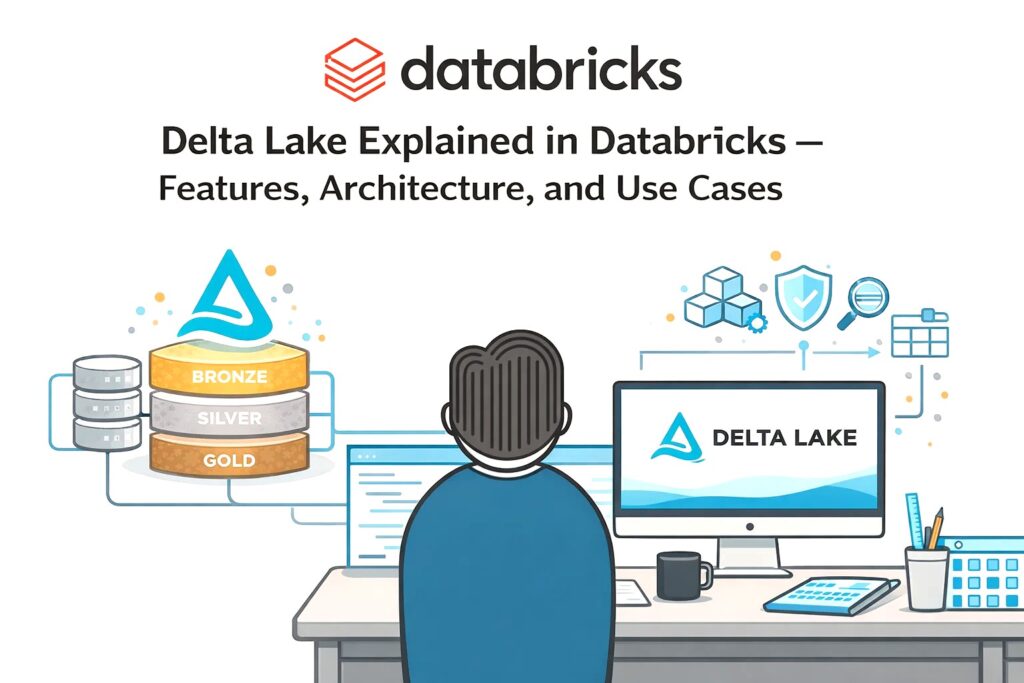

What is Delta Lake?

Delta Lake is an open-source storage layer built on top of data lakes.

In simple terms:

Delta Lake makes a data lake behave more like a database.

It adds reliability, consistency, and better query performance to data that already lives in the data lake.

Why Delta Lake is Needed

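Problems with a plain data lake, without Delta Lake:

- Data inconsistency: a job that fails midway can leave partial files that readers pick up

- No transactions: concurrent readers and writers are not isolated from each other

- Difficult updates: changing or deleting a few rows means rewriting files by hand

- Poor performance: many small files and no file-level statistics slow queries down

Delta Lake addresses each of these issues.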

Key Features of Delta Lake

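ACID Transactions

Each write either commits completely or not at all, so readers never see partial results

Schema Enforcement

Rejects writes whose columns or data types do not match the table schema

Schema Evolution

Allows the schema to change in a controlled way, for example by adding new columns

Time Travel

Query the table as it was at an earlier version or timestamp

Data Versioning

Every commit creates a new table version, which gives a history of changes over time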
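To make schema enforcement and schema evolution concrete, here is a minimal sketch in plain Python. It is a toy model, not Delta Lake's implementation: the `append_rows` function, the `SchemaMismatchError` class, and the `merge_schema` flag are hypothetical names chosen for illustration.

```python
# Toy model of schema enforcement and schema evolution (not Delta Lake's real code).

class SchemaMismatchError(Exception):
    """Raised when incoming rows do not match the table schema."""


def append_rows(table, rows, merge_schema=False):
    """Append rows to a table dict with keys 'schema' and 'rows'.

    table['schema'] maps column name -> Python type.
    With merge_schema=False, an unknown column rejects the whole batch.
    With merge_schema=True, an unknown column is added to the schema instead.
    A wrong type for a known column is always rejected.
    """
    schema = dict(table["schema"])
    for row in rows:
        for column, value in row.items():
            if column not in schema:
                if not merge_schema:
                    raise SchemaMismatchError(f"unexpected column: {column}")
                schema[column] = type(value)  # schema evolution: add the column
            elif not isinstance(value, schema[column]):
                raise SchemaMismatchError(
                    f"column {column} expects {schema[column].__name__}, "
                    f"got {type(value).__name__}"
                )
    # Nothing is written until every row has passed validation.
    table["schema"] = schema
    table["rows"].extend(rows)


orders = {"schema": {"order_id": int, "amount": float}, "rows": []}
append_rows(orders, [{"order_id": 1, "amount": 9.99}])

try:
    append_rows(orders, [{"order_id": 2, "amount": "free"}])  # wrong type
except SchemaMismatchError as error:
    rejected = str(error)

# Opting in to evolution lets a new column through.
append_rows(orders, [{"order_id": 3, "amount": 5.0, "coupon": "NEW10"}], merge_schema=True)
```

The bad batch is rejected as a whole and the table keeps only valid rows. In Databricks the opt-in step roughly corresponds to setting the `mergeSchema` option on a write.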

Step-by-Step Delta Lake in Databricks

Step 1: Data Storage (Raw Data)

Raw data lands in cloud object storage, for example:

- Amazon S3

- Azure Data Lake Storage

At this stage it is stored as plain files, such as CSV, JSON, or Parquet.

Step 2: Convert to Delta Format

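The raw data is written out as a Delta table.

A Delta table consists of Parquet data files plus:

- Metadata (the table schema and partitioning)

- A transaction log (the `_delta_log` directory) that records every commit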
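The transaction log is the piece that turns a folder of files into a table. The sketch below is a simplified model of the idea in plain Python: each commit is a numbered JSON file of `add` and `remove` actions, and the current table is whatever remains after replaying them in order. The helper names `commit` and `live_files` and the file names are hypothetical, and the real log records much more (schema, statistics, commit info).

```python
import json
import os
import tempfile


def commit(table_path, version, actions):
    """Write one commit file: a list of actions, one JSON object per line."""
    log_dir = os.path.join(table_path, "_delta_log")
    os.makedirs(log_dir, exist_ok=True)
    commit_file = os.path.join(log_dir, f"{version:020d}.json")
    # Mode "x" fails if the file already exists, so two writers cannot
    # both claim the same version number.
    with open(commit_file, "x") as handle:
        for action in actions:
            handle.write(json.dumps(action) + "\n")


def live_files(table_path):
    """Replay all commits in order and return the data files in the current table."""
    log_dir = os.path.join(table_path, "_delta_log")
    files = set()
    for name in sorted(os.listdir(log_dir)):
        with open(os.path.join(log_dir, name)) as handle:
            for line in handle:
                action = json.loads(line)
                if "add" in action:
                    files.add(action["add"]["path"])
                elif "remove" in action:
                    files.discard(action["remove"]["path"])
    return sorted(files)


table_path = tempfile.mkdtemp()
commit(table_path, 0, [{"add": {"path": "part-0000.parquet"}}])
commit(table_path, 1, [{"add": {"path": "part-0001.parquet"}}])
# A rewrite swaps part-0000 for a new file within a single commit.
commit(table_path, 2, [
    {"remove": {"path": "part-0000.parquet"}},
    {"add": {"path": "part-0002.parquet"}},
])
current = live_files(table_path)
```

Because a reader only trusts files listed in committed log entries, a half-written data file from a failed job is simply never visible.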

Step 3: Data Processing

Databricks processes data:

- Reads Delta tables

- Applies transformations

- Writes back to Delta

Step 4: Manage Updates

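Delta Lake supports row-level changes:

- Insert

- Update

- Delete

- Merge (upsert)

These run without rewriting the whole table. Only the data files that contain affected rows are replaced.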
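Why an update does not need to rewrite everything can be sketched as a copy-on-write step: data files are immutable, so a file with matching rows is replaced by a new file, and every other file is left alone. This is a toy model under that assumption; `update_rows`, `mark_shipped`, and the `-v2` file naming are hypothetical.

```python
def update_rows(files, predicate, change):
    """Apply `change` to rows matching `predicate`.

    `files` maps a data file name to its list of row dicts. A file with
    matching rows is replaced by a new file; files without matches are
    carried over untouched.
    Returns (new_files, actions), where actions is what the commit would record.
    """
    new_files = {}
    actions = []
    for name, rows in files.items():
        if not any(predicate(row) for row in rows):
            new_files[name] = rows  # untouched, not rewritten
            continue
        # Copy each matching row before changing it so the old file stays intact.
        rewritten = [change(dict(row)) if predicate(row) else row for row in rows]
        new_name = name.replace(".parquet", "-v2.parquet")  # hypothetical naming
        new_files[new_name] = rewritten
        actions.append({"remove": name})
        actions.append({"add": new_name})
    return new_files, actions


def mark_shipped(row):
    row["status"] = "shipped"
    return row


files = {
    "part-0000.parquet": [
        {"order_id": 1, "status": "new"},
        {"order_id": 2, "status": "new"},
    ],
    "part-0001.parquet": [{"order_id": 3, "status": "new"}],
}
new_files, actions = update_rows(files, lambda row: row["order_id"] == 2, mark_shipped)
```

Only `part-0000.parquet` is replaced here. The old file still exists with its original contents, which is what makes reading earlier versions possible.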

Step 5: Version Control

Every change is tracked as a new table version.

You can:

- Access old data

- Roll back changes
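Reading old data and rolling back both fall out of replaying the commit history up to a chosen version. The sketch below models that in plain Python; the `snapshot` and `restore` helpers and the file names are hypothetical, and the log is reduced to lists of add and remove actions.

```python
def snapshot(log, version=None):
    """Return the set of data files visible at `version` (latest if None).

    `log` is a list of commits; commit i is a list of
    ("add" | "remove", path) actions and defines version i.
    """
    if version is None:
        version = len(log) - 1
    if not 0 <= version < len(log):
        raise ValueError(f"version {version} does not exist")
    files = set()
    for commit_actions in log[: version + 1]:
        for kind, path in commit_actions:
            if kind == "add":
                files.add(path)
            else:
                files.discard(path)
    return files


def restore(log, version):
    """Roll back by appending a new commit that recreates an old snapshot."""
    target = snapshot(log, version)
    current = snapshot(log)
    restore_actions = [("remove", path) for path in sorted(current - target)]
    restore_actions += [("add", path) for path in sorted(target - current)]
    log.append(restore_actions)


log = [
    [("add", "orders-a.parquet")],                                    # version 0
    [("add", "orders-b.parquet")],                                    # version 1
    [("remove", "orders-a.parquet"), ("add", "orders-a2.parquet")],  # version 2: bad update
]
before_restore = snapshot(log)
restore(log, 1)  # history is kept; the rollback becomes version 3
```

Note that the rollback does not erase version 2. It adds a new version on top, so the bad update stays visible in the history. In Databricks, the equivalent operations are reading with the `versionAsOf` option and running the `RESTORE` command.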

Step 6: Query Optimization

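Delta improves query performance:

- Faster reads, because file-level statistics let queries skip files that cannot contain matching rows

- More efficient queries, because small files can be compacted into larger ones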
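One source of faster reads is data skipping: if the minimum and maximum value of a column are known for each file, a filtered query can ignore files whose range cannot match. The sketch below shows the idea in plain Python with made-up statistics; `files_to_scan` and the file names are hypothetical.

```python
def files_to_scan(file_stats, column, lower, upper):
    """Return files whose [min, max] range for `column` overlaps [lower, upper]."""
    selected = []
    for path, stats in file_stats.items():
        low, high = stats[column]
        if high >= lower and low <= upper:
            selected.append(path)
    return selected


# Hypothetical per-file statistics (ISO dates compare correctly as strings).
file_stats = {
    "part-0000.parquet": {"order_date": ("2024-01-01", "2024-01-31")},
    "part-0001.parquet": {"order_date": ("2024-02-01", "2024-02-29")},
    "part-0002.parquet": {"order_date": ("2024-03-01", "2024-03-31")},
}
to_scan = files_to_scan(file_stats, "order_date", "2024-02-10", "2024-02-20")
```

A query for mid-February orders needs to open one file out of three. Skipping works best when related values sit in the same files, which is why file layout and compaction matter.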

How Delta Lake Fits in Data Pipeline

Typical flow:

- Data ingested into data lake

- Converted to Delta format

- Processed using Databricks

- Stored as Delta tables

- Used for analytics

Real-World Example

E-commerce pipeline:

- Orders data lands in the data lake

- It is converted to Delta format

- Databricks processes the data

- Updates, such as order status changes, are applied with update or merge operations

- The resulting tables are used for reporting

Delta Lake vs Traditional Data Lake

Traditional Data Lake:

- Stores raw files

- No transactions

- Difficult updates

Delta Lake:

- Supports transactions

- Allows updates

- Ensures consistency

Common Mistakes

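- Leaving data as raw files instead of converting it to Delta

- Ignoring schema enforcement

- Not using partitioning where it would help, such as on large tables that are usually filtered by date

- Poor file management, such as letting many small files pile up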