Starting data engineering from scratch can feel confusing, especially if you don’t know where to begin. There are many tools, technologies, and concepts, and most beginners feel overwhelmed. Many people start learning random tools without a clear path and end up getting stuck.

The good news is that you don’t need to learn everything at once. If you follow a clear step-by-step approach, you can start learning data engineering easily, even with no prior experience. This guide will help you understand exactly what to learn and how to begin your journey in 2026.

Understanding Data Engineering

Before learning any tools, it is important to understand what data engineering actually is. Data engineering is the process of collecting, transforming, and storing data so that it can be used for analysis.

In simple terms, data engineers build systems that move data from one place to another and make it ready for use. These systems are called data pipelines. Once you understand this basic idea, the rest of the learning process becomes much easier.

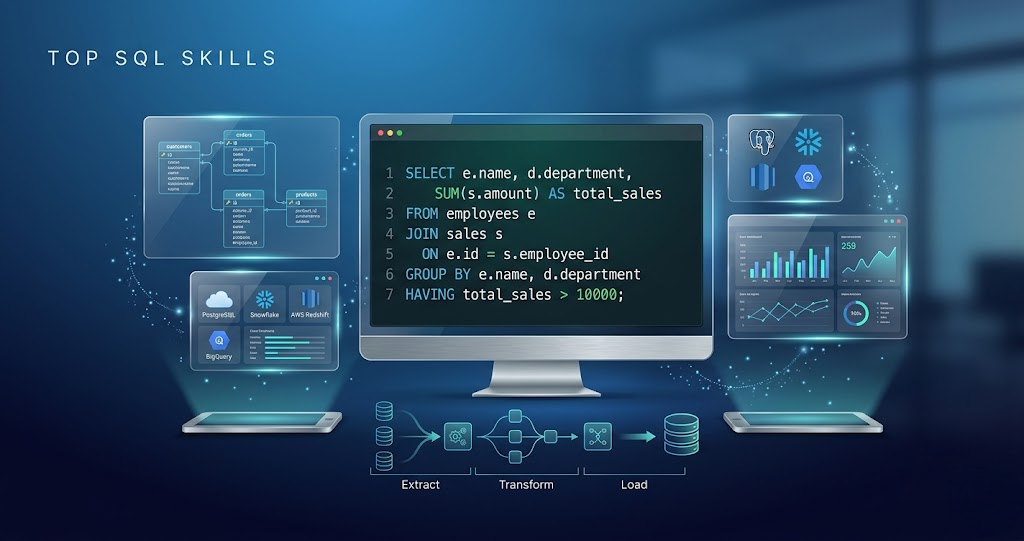

Start with SQL

SQL is the most important skill in data engineering. Almost every data engineer uses SQL daily to work with data. Without SQL, it becomes very difficult to move forward in this field.

You should focus on learning:

- Writing basic queries

- Filtering data using conditions

- Joining multiple tables

- Aggregating data using functions

Strong SQL skills will make learning other tools much easier.

Learn Basic Programming

After SQL, the next step is to learn basic programming. Python is the most commonly used language in data engineering. You don’t need advanced coding skills, but you should be comfortable with basic concepts.

Focus on understanding how to write simple programs, use functions, and work with data. Programming helps you build pipelines, automate tasks, and process data efficiently.

Understand Data Pipelines

Data pipelines are the core of data engineering. A pipeline is a system that takes data from a source, processes it, and stores it for analysis.

A simple pipeline usually follows this flow:

- Data is collected from a source

- Data is processed and cleaned

- Data is stored in a database or warehouse

You should also understand concepts like ETL (Extract, Transform, Load) and the difference between batch and real-time processing.

Learn Big Data Tools

Once you understand the basics, you can start learning tools like Apache Spark. These tools are used to process large amounts of data efficiently.

At the beginning, focus on understanding how data is read, transformed, and written using these tools. You don’t need to go deep immediately. Basic knowledge is enough to start.

Learn Cloud Basics

Most modern data engineering work happens on cloud platforms. It is important to learn at least one cloud platform such as AWS, Azure, or GCP.

You should understand basic services like:

- Storage systems

- Data processing tools

- Data pipeline services

Start with one platform and later expand your knowledge to others.

Build Small Projects

Learning theory alone is not enough. You need to build projects to understand how things work in real-world scenarios.

Start with simple projects like reading data from a file, cleaning it, and storing it in a database. Then move to building basic pipelines and using cloud tools. Projects help you gain confidence and practical experience.

Learn Real-World Concepts

After gaining basic knowledge, you should start learning real-world concepts that are used in production systems. These include data quality, error handling, partitioning, and performance optimization.

These topics help you understand how to build reliable and efficient data systems.

Practice Regularly

Consistency is the key to learning data engineering. You don’t need to study for long hours every day, but you should practice regularly. Even one to two hours daily can make a big difference.

Focus on improving your SQL, coding skills, and understanding of pipelines. Regular practice helps you retain concepts and improve faster.

Prepare for Jobs

Once you have learned the basics and built some projects, you can start preparing for job opportunities. Focus on understanding concepts, solving SQL problems, and explaining how data pipelines work.

It is also important to build a portfolio of your projects. This helps you showcase your skills and improves your chances of getting hired.

Common Mistakes to Avoid

Many beginners make mistakes that slow down their progress. Some of the most common mistakes include:

- Trying to learn too many tools at once

- Skipping fundamental concepts

- Not practicing enough

- Following tutorials without a clear plan

Avoiding these mistakes will make your learning journey much smoother.

Starting data engineering from scratch is not as difficult as it seems. The difficulty mostly comes from lack of direction, not from the field itself. If you follow a structured path and focus on basics, learning becomes much easier.

Start with SQL and programming, understand data pipelines, and gradually move to tools and cloud platforms. Stay consistent, build projects, and keep improving step by step. Over time, you will develop the skills needed to become a data engineer.