Data Quality Checks in Data Engineering – Rules, Examples

Introduction

Trying to understand data quality checks in data engineering but not sure what actually needs to be checked?

You’re not alone.

Most people:

- Learn ETL pipelines

- Learn tools like Spark and Glue

- Focus on processing

But ignore data quality.

And in real projects, bad data is often a bigger problem than slow pipelines, because processing wrong data gives wrong results.

In this blog, you’ll understand:

- What data quality checks are

- Why they are important

- Types of checks used in real pipelines

- Where they fit in data engineering

Data quality checks are validations applied to data to ensure it is correct, complete, and reliable before processing or analytics.

Why Data Quality Checks are Important

- Prevent incorrect data

- Avoid wrong business decisions

- Maintain data consistency

- Improve pipeline reliability

Without data quality checks, a pipeline can run successfully and still produce invalid results.

Where Data Quality Checks Happen

In real pipelines, checks happen at multiple stages:

- Ingestion layer

- Processing layer

- Before loading into the warehouse

Step 1: Schema Validation

Check if data matches expected structure.

Examples:

- Column names

- Data types

- Missing columns

If the schema is wrong, the pipeline should fail.
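Here is a minimal sketch of a schema check in plain Python, assuming each record arrives as a dictionary. The `EXPECTED_SCHEMA` mapping and the column names in it are hypothetical and only there for illustration.

```python
# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "customer_id": int, "amount": float}

def validate_schema(row: dict, expected: dict) -> list[str]:
    """Return a list of schema problems for one record (empty if none)."""
    problems = []
    for column, expected_type in expected.items():
        if column not in row:
            problems.append(f"missing column: {column}")
        # None is skipped here; missing values are a separate (null) check.
        elif row[column] is not None and not isinstance(row[column], expected_type):
            problems.append(f"wrong type for {column}: {type(row[column]).__name__}")
    for column in row:
        if column not in expected:
            problems.append(f"unexpected column: {column}")
    return problems

good = {"order_id": 1, "customer_id": 10, "amount": 99.5}
bad = {"order_id": "1", "amount": 99.5, "extra": True}

print(validate_schema(good, EXPECTED_SCHEMA))  # []
print(validate_schema(bad, EXPECTED_SCHEMA))
```

In a real pipeline you would probably raise an error when the list is not empty, so the run stops before any processing happens.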

Step 2: Null Checks

Check for missing values.

Examples:

- id should not be null

- important fields should not be empty

Null values can break downstream processing.
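A null check can be sketched like this, assuming records are dictionaries and that a blank string counts as "empty". The function name and the sample fields are hypothetical.

```python
def find_null_violations(rows, required_fields):
    """Return (row_index, field) pairs where a required field is missing, None, or blank."""
    violations = []
    for index, row in enumerate(rows):
        for field in required_fields:
            value = row.get(field)
            if value is None or (isinstance(value, str) and value.strip() == ""):
                violations.append((index, field))
    return violations

rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": None, "email": "b@example.com"},
    {"id": 3, "email": "   "},
]
null_violations = find_null_violations(rows, ["id", "email"])
print(null_violations)  # [(1, 'id'), (2, 'email')]
```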

Step 3: Duplicate Checks

Check for duplicate records.

Examples:

- Duplicate transactions

- Duplicate customer IDs

Duplicates inflate counts and totals, which leads to wrong analytics.
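One simple way to find duplicates is to count how often each key appears. This is a sketch, assuming `txn_id` (a hypothetical field) is what makes a transaction unique.

```python
from collections import Counter

def find_duplicate_keys(rows, key_fields):
    """Return {key_tuple: count} for every key that appears more than once."""
    counts = Counter(tuple(row[field] for field in key_fields) for row in rows)
    return {key: count for key, count in counts.items() if count > 1}

transactions = [
    {"txn_id": "T1", "amount": 50},
    {"txn_id": "T2", "amount": 20},
    {"txn_id": "T1", "amount": 50},
]
duplicates = find_duplicate_keys(transactions, ["txn_id"])
print(duplicates)  # {('T1',): 2}
```

Passing more than one field in `key_fields` checks a composite key instead of a single column.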

Step 4: Data Type Validation

Check if data types are correct.

Examples:

- Date column should be date

- Amount should be numeric

Wrong data types cause parsing errors and failed calculations downstream.
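When values arrive as strings (for example from a CSV file), a type check usually means "can this value be parsed as the expected type?". The sketch below assumes dates are expected in ISO format (`YYYY-MM-DD`); the helper names are hypothetical.

```python
from datetime import date
from decimal import Decimal, InvalidOperation

def is_iso_date(value: str) -> bool:
    """True if the string parses as a real calendar date in ISO format."""
    try:
        date.fromisoformat(value)
        return True
    except ValueError:
        return False

def is_numeric(value: str) -> bool:
    """True if the string parses as a finite decimal number."""
    try:
        return Decimal(value).is_finite()
    except InvalidOperation:
        return False

print(is_iso_date("2024-01-15"))  # True
print(is_iso_date("2024-02-30"))  # False, there is no 30 February
print(is_numeric("12.50"))        # True
print(is_numeric("abc"))          # False
```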

Step 5: Range Checks

Check if values fall within valid range.

Examples:

- Age should not be negative

- Salary should be within expected range
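A range check is just a comparison against a minimum and a maximum. In this sketch the bounds (age 0 to 120, salary 10,000 to 500,000) are made-up values; the real limits depend on your data.

```python
def out_of_range(rows, field, minimum, maximum):
    """Return the rows whose field value is outside [minimum, maximum]."""
    return [row for row in rows if not (minimum <= row[field] <= maximum)]

employees = [
    {"name": "A", "age": 34, "salary": 55_000},
    {"name": "B", "age": -2, "salary": 48_000},
    {"name": "C", "age": 41, "salary": 9_000_000},
]

# Hypothetical bounds, chosen only for the example.
bad_ages = out_of_range(employees, "age", 0, 120)
bad_salaries = out_of_range(employees, "salary", 10_000, 500_000)

print([row["name"] for row in bad_ages])      # ['B']
print([row["name"] for row in bad_salaries])  # ['C']
```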

Step 6: Business Rule Validation

Check based on business logic.

Examples:

- Order amount should be greater than zero

- Status values should be valid

This ensures the data is correct from a business point of view, not just technically valid.
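Business rules can be kept as a small table of named conditions, so it is easy to see which rule a record broke. This is only a sketch; the status values and rule names are hypothetical.

```python
# Hypothetical list of allowed statuses.
VALID_STATUSES = {"PLACED", "SHIPPED", "DELIVERED", "CANCELLED"}

# Each rule is a name plus a function that returns True when the order is valid.
RULES = {
    "amount_positive": lambda order: order["amount"] > 0,
    "status_valid": lambda order: order["status"] in VALID_STATUSES,
}

def failed_rules(order):
    """Return the names of all rules the order violates."""
    return [name for name, rule in RULES.items() if not rule(order)]

print(failed_rules({"amount": 120.0, "status": "SHIPPED"}))  # []
print(failed_rules({"amount": 0, "status": "UNKNOWN"}))      # ['amount_positive', 'status_valid']
```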

Step 7: Consistency Checks

Check data consistency across datasets.

Examples:

- Customer ID exists in master table

- Foreign key validation
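A foreign key style check can be sketched by collecting the keys from the master table and looking for values that do not exist there. The table and column names below are hypothetical.

```python
def orphan_keys(child_rows, parent_rows, key):
    """Return the sorted key values in child_rows that are missing from parent_rows."""
    parent_keys = {row[key] for row in parent_rows}
    return sorted({row[key] for row in child_rows if row[key] not in parent_keys})

customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": 100, "customer_id": 1},
    {"order_id": 101, "customer_id": 3},
    {"order_id": 102, "customer_id": 3},
]

print(orphan_keys(orders, customers, "customer_id"))  # [3]
```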

Step 8: Data Freshness Check

Check if data is up to date.

Examples:

- Daily data should be available

- No missing partitions
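Both examples can be sketched with date arithmetic, assuming the data is partitioned by day. The one-day lag threshold is an assumption, not a standard value.

```python
from datetime import date, timedelta

def missing_partitions(available, start, end):
    """Return the dates in [start, end] that have no partition."""
    expected = (start + timedelta(days=i) for i in range((end - start).days + 1))
    return [day for day in expected if day not in available]

def is_fresh(latest, today, max_lag_days=1):
    """True if the newest partition is at most max_lag_days old."""
    return (today - latest).days <= max_lag_days

available = {date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 4)}

print(missing_partitions(available, date(2024, 1, 1), date(2024, 1, 4)))
print(is_fresh(max(available), today=date(2024, 1, 5)))  # True
print(is_fresh(max(available), today=date(2024, 1, 7)))  # False
```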

Step 9: Record Count Validation

Check number of records.

Examples:

- Source vs target count

- Sudden drop in data

This helps detect data loss.
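Here is a rough sketch of both count checks. The 50% drop threshold is an assumption; a sensible value depends on how much your daily volume normally varies.

```python
def counts_match(source_count, target_count):
    """True if every source record reached the target."""
    return source_count == target_count

def is_sudden_drop(today_count, previous_counts, max_drop_ratio=0.5):
    """True if today's count is more than max_drop_ratio below the recent average."""
    if not previous_counts:
        return False  # nothing to compare against yet
    baseline = sum(previous_counts) / len(previous_counts)
    return today_count < baseline * (1 - max_drop_ratio)

print(counts_match(1000, 998))                    # False
print(is_sudden_drop(400, [1000, 1100, 900]))     # True
print(is_sudden_drop(950, [1000, 1100, 900]))     # False
```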

Step 10: Format Validation

Check data format.

Examples:

- Email format

- Date format
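Format checks are often done with a pattern or a parser. The email pattern below is deliberately simple (something, then `@`, then a domain with a dot) and is not a full email validator; the default date format is also an assumption.

```python
import re
from datetime import datetime

# Simplified pattern: no spaces, exactly one "@", and a dot in the domain part.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")

def is_valid_email(value):
    """True if the whole string matches the simplified email pattern."""
    return EMAIL_PATTERN.fullmatch(value) is not None

def matches_date_format(value, fmt="%Y-%m-%d"):
    """True if the string can be parsed with the given strptime format."""
    try:
        datetime.strptime(value, fmt)
        return True
    except ValueError:
        return False

print(is_valid_email("user@example.com"))             # True
print(is_valid_email("user@example"))                 # False
print(matches_date_format("2024-01-15"))              # True
print(matches_date_format("15/01/2024"))              # False
print(matches_date_format("15/01/2024", "%d/%m/%Y"))  # True
```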

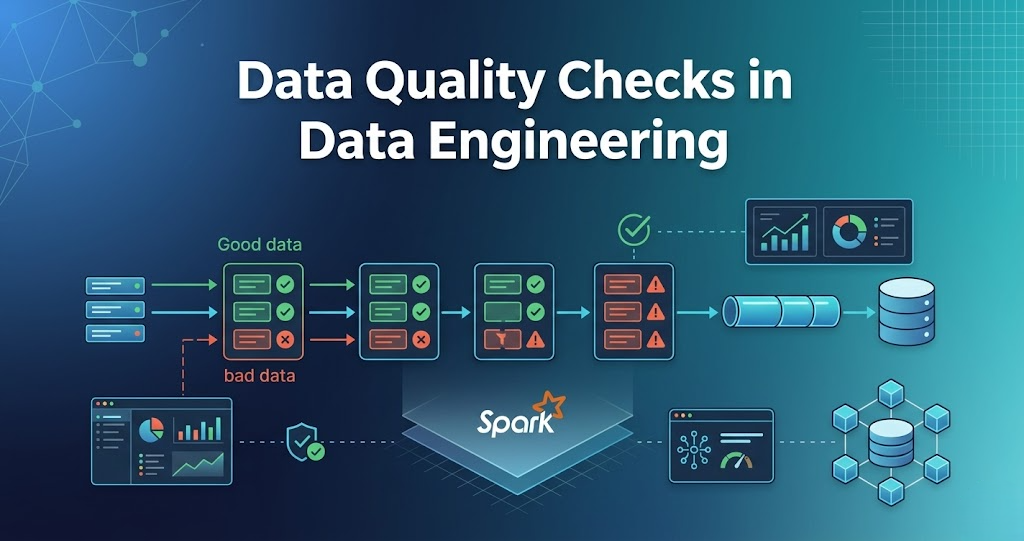

How Data Quality Checks Fit in Pipeline

Typical flow:

- Data ingested from source

- Schema validation

- Apply data quality checks

- Clean and transform data

- Load into storage

- Validate before analytics
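To show how checks plug into this flow, here is a minimal sketch of a runner that executes checks in order and stops the pipeline on the first failure. `DataQualityError`, `run_checks`, and the two sample checks are hypothetical names, not part of any specific tool.

```python
class DataQualityError(Exception):
    """Raised when a check fails, so the pipeline stops instead of loading bad data."""

def run_checks(rows, checks):
    """Run each (name, check) pair in order; raise on the first failure."""
    for name, check in checks:
        if not check(rows):
            raise DataQualityError(f"check failed: {name}")
    return rows

checks = [
    ("has_rows", lambda rows: len(rows) > 0),
    ("id_not_null", lambda rows: all(row.get("id") is not None for row in rows)),
]

validated = run_checks([{"id": 1}, {"id": 2}], checks)  # passes, returns the rows

try:
    run_checks([{"id": None}], checks)
except DataQualityError as error:
    print(error)  # check failed: id_not_null
```

The transform and load steps would only run on what `run_checks` returns, so bad data never reaches storage.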

Real-World Example

E-commerce pipeline:

- Orders data ingested

- Null checks applied

- Duplicate records removed

- Business rules validated

- Clean data stored

- Used for reporting
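The e-commerce flow above could look roughly like this in code. It is a simplified sketch: the field names are hypothetical, and "removing duplicates" is assumed to mean keeping the first record for each `order_id`.

```python
def clean_orders(orders):
    """Apply null, duplicate, and business-rule checks; return (clean, rejected)."""
    clean, rejected, seen_ids = [], [], set()
    for order in orders:
        if order.get("order_id") is None or order.get("amount") is None:
            rejected.append((order, "null in required field"))
        elif order["order_id"] in seen_ids:
            rejected.append((order, "duplicate order_id"))
        elif order["amount"] <= 0:
            rejected.append((order, "amount must be greater than zero"))
        else:
            seen_ids.add(order["order_id"])
            clean.append(order)
    return clean, rejected

orders = [
    {"order_id": 1, "amount": 25.0},
    {"order_id": 1, "amount": 25.0},   # duplicate
    {"order_id": 2, "amount": None},   # null amount
    {"order_id": 3, "amount": -5.0},   # breaks the business rule
    {"order_id": 4, "amount": 10.0},
]

clean, rejected = clean_orders(orders)
print([order["order_id"] for order in clean])  # [1, 4]
print([reason for _, reason in rejected])
```

Keeping the rejected records with a reason, instead of silently dropping them, makes it easier to trace problems back to the source.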

Common Mistakes

- Ignoring data quality

- Not failing the pipeline on errors

- Not validating schema

- Checking only after processing